Work Package 3 – Interactions

Enhancing collaboration by addressing challenges in human and robot interactions for trustworthy and effective teamwork.

Human-Robot Interactions addresses joint work and collaboration between people and autonomous systems, including the move from classical dyadic interactions between one human user and one robot to scenarios with heterogeneous teams of multiple people and robots.

ROBOTS TO HUMANS

Key challenges in the interaction from robots to humans involve describing what the robot is doing, why it is doing it (e.g., its goals) and what it plans to do next. This requires the robot's behaviour to be transparent and explainable, which links this work to WP2. New research into people’s theory of mind (ToM) of autonomous systems can potentially show how people come to understand a robot’s behaviour and goals. This provides mechanisms for understanding interactions with users (WP5) and regulators (WP4).

HUMANS TO ROBOTS

The user needs information to understand how the robot uses input from its sensors and from interactions with humans to decide what to do. This information supports the step from ‘concept to action’, ensuring that users can convey what the robot must do. This involves exploring and developing a library of instruction methods, ranging from dialogue to ‘programming by example’. This will provide mechanisms for actors to affect the performance of robots and will be important for WP5.

FROM ONE TO MANY

As well as interacting with people, robots are being designed to work in small groups (robot teams) or in larger numbers (swarms). It is important to make sure that robot-robot teams, swarms and human-robot teams can achieve their task where possible, recover from failure and interact safely. In WP3 we will assess this using formal verification, a mathematical analysis of systems, alongside non-formal verification techniques.

Updates

Research Spotlight – Dr Federico Tavella

Federico is working on foundation models to enhance human-robot interaction and collaboration. When integrated into physical robots, these models are expected to improve the robots' abilities by providing them with knowledge and enabling them to understand and respond to various types of information. This approach will allow us to move beyond traditional programming methods and use natural language to instruct robots on tasks and facilitate their learning. By integrating these models, we can create robots that are more capable, adaptable and intuitive in their interactions with humans.

Research Spotlight – Dr Joseph Bolarinwa

Trust plays a critical role in effective human-robot collaboration, but how can a robot know if a human trusts it? Joseph’s initial study investigated the relationship between human facial action units (AUs) and perceived trust ratings during a maze-solving task with a Furhat robot. Fifty-eight participants engaged in collaborative decision-making with the robot, and video recordings of their interactions were analysed using the OpenFace toolkit to obtain AU activations. Results showed that specific AU variations reliably signal trust building and trust breaking. These insights can inform the development of adaptive robots that respond to the nonverbal behaviours of humans in real time in settings where full automation is not feasible or appropriate, such as personal care. Joseph plans to further validate his trust model using a simple industrial process control task, working with the Human Factors and Visual Engineering team at Amentum.

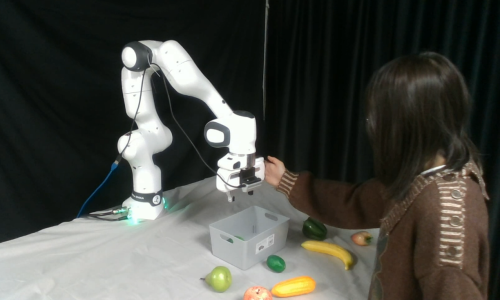

Research Spotlight – Xinyun Chi

Xinyun is working on learning-based robot manipulation, focusing on robot learning from human demonstration combined with adaptive control. The aim is to improve human-robot interaction by providing more natural and personalised robot assistance based on the user's current status. The pipeline integrates imitation learning-based manipulation with adaptive control driven by real-time human feedback, and is intended for use across diverse industrial scenarios. The goal is for robots and humans to collaborate seamlessly, leading to greater human satisfaction and trust.

Research Spotlight – Matthew Rolph

Matthew is working on the disambiguation of human instructions using large language models, aiming to enable robots to better comprehend natural language and to improve human-robot interaction when instructions are ambiguous. The goal is to integrate this into a range of industrial settings, enhancing collaboration and reducing friction when robots respond to natural language queries.